The Loop That Optimizes Anything

Why LLMs changed the hardest step in continuous improvement.

Progress in modern AI comes from fast empirical cycles: run more experiments, measure cleanly, keep what works, discard what does not. The moat in AI is not raw data alone, but a data engine — repeated data acquisition, retraining, evaluation, deployment, and telemetry.

In Karpathy’s practical guide to training neural networks, the same principle shows up again: simplify aggressively, trust reproducible metrics, form concrete hypotheses, and validate them step by step.

This idea generalizes well beyond machine learning.

The human sets the objective, defines the constraints, and decides what kind of search is acceptable. The machine does the exhausting part — generating variants, running bounded tests, logging outcomes, and updating the baseline.

Once you see it that way, the pattern is everywhere.

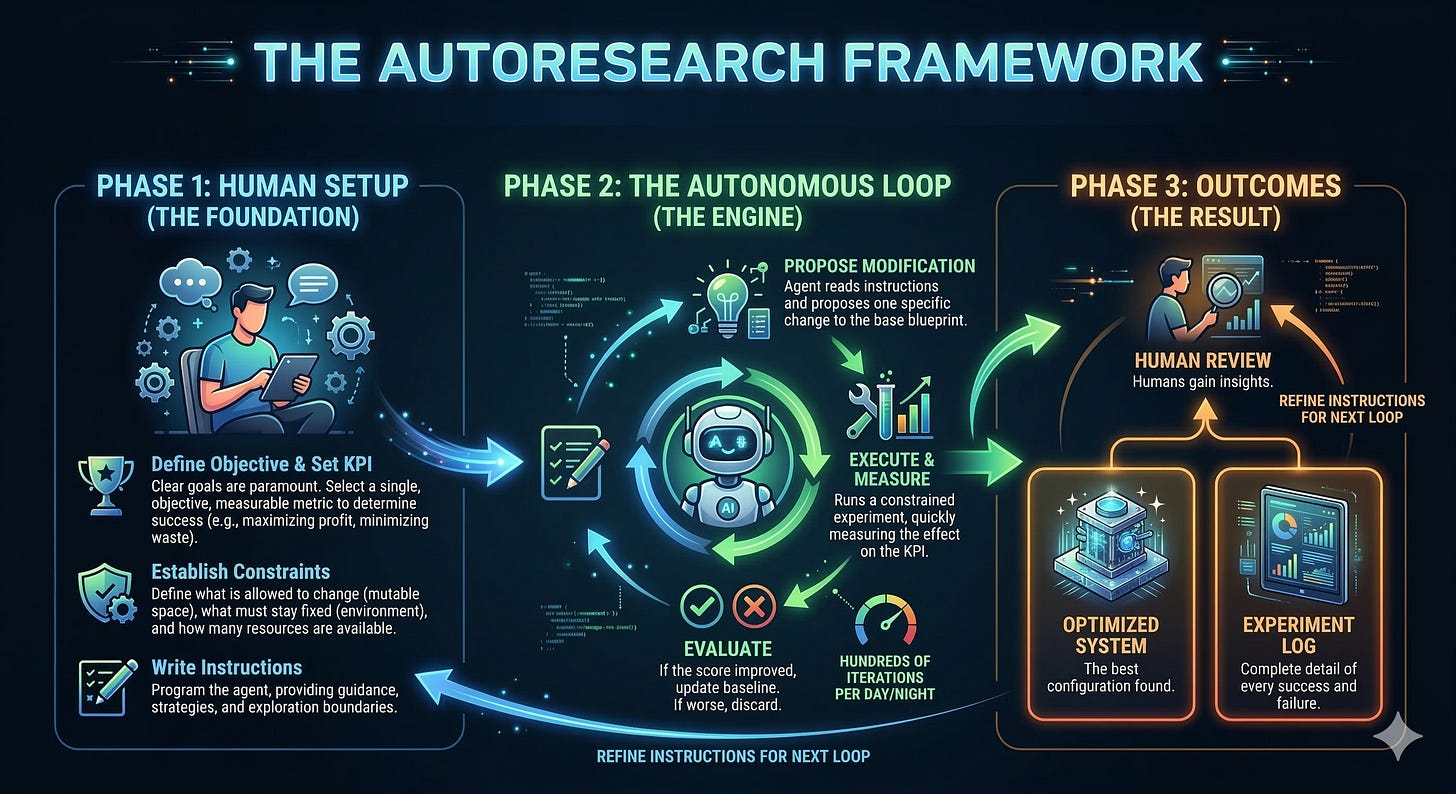

The 3-step framework

First, a human defines the game.

You choose the objective. You choose the metric. You choose the constraints. If the score is vague, delayed, or easy to game, the loop will optimize nonsense with terrifying efficiency. Karpathy’s own training recipe is explicit on this point: you need a metric you trust, baselines you can beat, and evaluations that are reproducible before you start scaling the search.

Then the system enters the loop.

An AI agent proposes one change. The system runs one test and measures the result against the control. It keeps the change only if it improves the target metric under the agreed constraints. The loop repeats until gains flatten, costs rise, or the objective changes.
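The loop described here can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the names `run_loop`, `propose`, `score`, `within_constraints`, and `patience` are hypothetical, the "agent" is a stand-in random perturbation rather than an LLM, and "gains flatten" is approximated as a fixed number of consecutive rejected candidates.

```python
import random
from typing import Callable, Dict, List, Tuple


def run_loop(
    baseline: float,
    propose: Callable[[float], float],
    score: Callable[[float], float],
    within_constraints: Callable[[float], bool],
    max_steps: int = 200,
    patience: int = 30,
) -> Tuple[float, List[Dict]]:
    """Keep-if-better loop. Returns the final baseline and the full trace."""
    best, best_score = baseline, score(baseline)
    trace: List[Dict] = []
    stale = 0  # consecutive candidates that were not kept
    for step in range(max_steps):
        candidate = propose(best)                # one change
        allowed = within_constraints(candidate)  # guardrails come first
        cand_score = score(candidate) if allowed else None  # one test
        kept = allowed and cand_score > best_score          # compare to control
        trace.append({"step": step, "candidate": candidate,
                      "score": cand_score, "kept": kept})
        if kept:
            best, best_score, stale = candidate, cand_score, 0
        else:
            stale += 1
            if stale >= patience:  # assumed stopping rule: gains have flattened
                break
    return best, trace


# Toy problem (an assumption for illustration): maximize -(x - 3)^2 on [0, 5].
rng = random.Random(0)
final, trace = run_loop(
    baseline=0.5,
    propose=lambda x: x + rng.gauss(0, 0.5),
    score=lambda x: -(x - 3.0) ** 2,
    within_constraints=lambda x: 0.0 <= x <= 5.0,
)
print(f"final x = {final:.3f} after {len(trace)} candidates")
```

Every candidate is logged, including the rejected ones and the ones that violated a constraint. Swapping `propose` for a model call changes where candidates come from, not the shape of the loop.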

Then a human reviews the trace.

The output is a map of the search space — what failed, what transferred, what broke, what surprisingly worked. That experiment log is where judgment compounds.
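One way to turn an experiment log into that kind of map is to group it by the type of change that was tried. The sketch below assumes each log entry carries a hypothetical `kind` label, a `delta` (score minus control), and a `kept` flag. Those field names and the sample data are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, List


def summarize(trace: List[Dict]) -> Dict[str, Dict[str, float]]:
    """Group trace entries by kind of change: tries, keeps, and best gain."""
    summary: Dict[str, Dict[str, float]] = defaultdict(
        lambda: {"tried": 0, "kept": 0, "best_delta": float("-inf")}
    )
    for entry in trace:
        row = summary[entry["kind"]]
        row["tried"] += 1
        row["kept"] += int(entry["kept"])
        row["best_delta"] = max(row["best_delta"], entry["delta"])
    return dict(summary)


# Hypothetical trace: `kind` labels the type of change tried.
trace = [
    {"kind": "headline", "delta": 0.4, "kept": True},
    {"kind": "headline", "delta": -0.1, "kept": False},
    {"kind": "layout", "delta": -0.3, "kept": False},
    {"kind": "pricing_copy", "delta": 0.9, "kept": True},
]
for kind, row in summarize(trace).items():
    print(kind, row)
```

A reviewer reading this summary sees which families of change never paid off and which are worth a deeper search. That is a judgment call the loop itself does not make.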

Why this works

Because reality is a harsher editor than opinion.

Most teams debate their way around uncertainty. The loop does the opposite — it converts disagreement into testable variation. That is why it is so effective. It does not require perfect foresight. It requires a scoreboard.

It also forces honesty.

A loop with a trusted metric is brutally clarifying. It tells you whether the elegant idea actually helped, whether the ugly workaround outperformed the strategy deck, and whether the thing everyone “felt good about” was dead on arrival. Controlled experiments give teams a scientific way to evaluate ideas, and the results are often humbling enough to challenge a priori prioritization.

And it compounds.

Each iteration is small. The advantage is not in any single step. The advantage is in doing hundreds of steps while everyone else is still arguing over the first one.

What LLMs actually changed

None of this started with LLMs.

A/B testing, evolutionary search, Bayesian optimization, hyperparameter sweeps, and classic control loops all existed long before today’s models. The basic structure — vary something, measure the result, keep what works — is old.

What’s new is the mutation engine.

Before LLMs, the loop usually broke at the same place: someone still had to invent the next candidate. A human had to write the new headline, redesign the workflow, refactor the code, change the prompt, or specify the next parameter move. In narrower systems, engineers could hard-code that search space — but only for well-defined variables.

LLMs change that.

They can now do the hardest general-purpose step in the loop — propose the next configuration. Not just pick from a menu of preset options, but generate new ones from scratch across messy, semi-structured domains: copy, code, UI flows, sales scripts, SOPs, product specs, even research plans.

Humans still own the high-level work. They choose the objective, define the guardrails, and decide what counts as success. But the expensive cognitive bottleneck — coming up with the next plausible variant, over and over — is no longer fully manual.
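The difference between picking from a menu and generating a candidate can be shown as two interchangeable proposer functions. This is a rough sketch only: `menu_proposer`, `generative_proposer`, and `fake_model` are hypothetical names, and `fake_model` is a trivial placeholder standing in for a real model call, which is deliberately not shown.

```python
import random
from typing import Callable, List


def menu_proposer(options: List[str], rng: random.Random) -> Callable[[str], str]:
    """Pre-LLM style: the search space is a fixed, hand-written menu."""
    def propose(current: str) -> str:
        return rng.choice(options)
    return propose


def generative_proposer(generate: Callable[[str], str]) -> Callable[[str], str]:
    """LLM style: any text-in, text-out function can write a new candidate."""
    def propose(current: str) -> str:
        prompt = f"Rewrite this headline to be clearer, changing one thing:\n{current}"
        return generate(prompt)
    return propose


def fake_model(prompt: str) -> str:
    """Placeholder for a real model call; it only edits the last prompt line."""
    headline = prompt.splitlines()[-1]
    return headline.replace("Our product", "This tool")


rng = random.Random(0)
from_menu = menu_proposer(["Save time today", "Work faster"], rng)
from_model = generative_proposer(fake_model)

current = "Our product saves you time"
print(from_menu(current))
print(from_model(current))
```

Both proposers have the same signature, so the surrounding loop does not need to know which one it is calling. The menu version can only return what an engineer wrote in advance, while the generative version builds on the current candidate.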

And that changes the economics of iteration.

Where this breaks

It does not work everywhere.

If you cannot define a meaningful score, you do not have an optimization problem yet — you have a framing problem.

If feedback arrives six months later, the loop is too slow.

If each test is expensive, risky, or irreversible, brute-force iteration becomes irresponsible.

If the metric is a bad proxy, the system will optimize the proxy and damage the thing you actually care about.

That’s not a flaw in the method. That is the method exposing your real bottleneck.
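The bad-proxy failure mode is easy to reproduce on a toy problem. In the sketch below, `proxy` and `true_value` are made-up functions chosen only for illustration: the proxy keeps rising with `x`, while the quantity you actually care about peaks early and then falls.

```python
from typing import Callable


def proxy(x: float) -> float:
    """Hypothetical proxy metric: always rewards more of x."""
    return x


def true_value(x: float) -> float:
    """Hypothetical real objective: improves at first, then degrades."""
    return x - 0.1 * x * x


def climb(metric: Callable[[float], float], start: float = 0.0,
          step: float = 1.0, max_steps: int = 20) -> float:
    """Greedy hill climb: keep stepping while the chosen metric improves."""
    x = start
    for _ in range(max_steps):
        candidate = x + step
        if metric(candidate) <= metric(x):
            break
        x = candidate
    return x


x_proxy = climb(proxy)      # optimizes the proxy
x_true = climb(true_value)  # optimizes the real objective

print("optimizing proxy:", x_proxy, "-> true value", true_value(x_proxy))
print("optimizing truth:", x_true, "-> true value", true_value(x_true))
```

The same loop that finds the peak when pointed at `true_value` walks straight past it when pointed at `proxy`, ending with a true value lower than where it started.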

To sum it up:

Anything that can be scored, safely varied, and rapidly tested can be improved by an autoresearch loop.